A Gentleman In Moscow is the last show I worked on during my latest stint at Rumble VFX. The reel above contains breakdowns of many of the shots I worked on.

Creating a City

I was initially tasked with creating a reusable Moscow skyline asset, inspired by photographs from the 1920s and 1930s. Many of the buildings came from off-the-shelf kits. These had textures, but weren’t yet set up for Redshift 3D, nor indeed Houdini-ready. The process became much easier after a few models. For example, I had a script set up to convert shaders to Redshift ones with the correct names and textures attached. All of the models needed balancing to look approximately the same when brought into one primary scene.
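As a rough illustration of the kind of conversion script mentioned above, here is a minimal sketch in plain Python. It only plans which texture goes into which material slot based on file name suffixes. The suffix table, the slot labels and the `_rsmat` naming are assumptions made for this example, not the actual production script, and a real version would go on to build the nodes inside Houdini.

```python
from pathlib import PurePosixPath

# Hypothetical suffix -> slot mapping; real kits and Redshift setups will differ.
SUFFIX_TO_SLOT = {
    "basecolor": "diffuse_color",
    "albedo": "diffuse_color",
    "roughness": "refl_roughness",
    "metallic": "refl_metalness",
    "normal": "bump_input",
}


def plan_material(model_name, texture_paths):
    """Return a material name plus a slot -> texture path mapping."""
    slots = {}
    for path in texture_paths:
        # "tex/bolshoi_BaseColor.png" -> "bolshoi_basecolor" -> "basecolor"
        stem = PurePosixPath(path).stem.lower()
        suffix = stem.rsplit("_", 1)[-1]
        slot = SUFFIX_TO_SLOT.get(suffix)
        # Unknown suffixes are skipped; the first texture found for a slot wins.
        if slot is not None and slot not in slots:
            slots[slot] = path
    return {"name": f"{model_name}_rsmat", "slots": slots}
```

Calling `plan_material("bolshoi", [...])` with a kit's texture list gives a consistent material name and a dictionary that a scene-building step could then consume.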

Photography of the time shows the typical Russian formal gardens in front of the Bolshoi and Metropol. I began the layout with these gardens, working out from there. Most of the planting is point-instanced models of bushes, flowers and grasses, with hero shrubs and trees placed by hand. Hand placement was needed in part because in some shots they got in the way, and in others more had to be added to give height to the scene.

Paved areas were defined with curves. Stone edging was added procedurally along the curves, and cambered road surfaces were built outwards from them. There was even a tram system for creating recessed metal rails in the road and slightly different cobbles on the ground. A separate system defined the power lines. In one shot I went to the extent of animating the overhead lines and the pantograph on the tram, something that is maybe only visible on very large TVs!
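To give an idea of what a camber built away from a curve can look like, here is a small sketch in plain Python. It assumes a simple parabolic profile, with the road highest at the centre curve and falling to zero at the edge. The function name, the parabolic shape and the example numbers are all assumptions for illustration; the actual setup may well have used a different profile.

```python
def camber_height(distance, half_width, crown):
    """Height offset for a point `distance` away from the centre curve.

    Assumes a parabolic camber: `crown` at the centre, 0 at the road edge.
    """
    if half_width <= 0:
        raise ValueError("half_width must be positive")
    # Normalise the distance to 0..1 and clamp so points past the edge stay flat.
    t = min(abs(distance) / half_width, 1.0)
    return crown * (1.0 - t * t)
```

For a road 8 m wide with a 0.1 m crown, `camber_height(0.0, 4.0, 0.1)` is the full crown height and `camber_height(4.0, 4.0, 0.1)` is zero at the kerb.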

Optimisation

A technical challenge with having so many models and lights in shot was memory limits, especially VRAM. To remain quick at rendering, Redshift must use less than the available GPU memory. Plants were read off disk at render time and most of the buildings were converted to Redshift proxy (.rs) files. These loaded quickly and could still have their shaders altered globally for one specific pass, the snow.

The snow pass was rendered in addition to the usual beauty, with a snow material overriding all the usual ones. It was blended with the beauty in Nuke using Normal, AO, noise and other custom AOVs. This method allowed us to be flexible about the location of snow. As with other projects where I’m setting up a primary setup for a team, I had a Nuke script that could show compositors roughly the end result I was aiming at.
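The blend itself lived in Nuke, but the idea can be sketched per pixel in plain Python. This version assumes snow settles on upward-facing surfaces (the Y component of the Normal AOV), is reduced where AO is dark, and is broken up by a noise value. The threshold and the way the three terms are multiplied together are assumptions for illustration, not the actual comp.

```python
def clamp01(value):
    """Clamp a value into the 0..1 range."""
    return max(0.0, min(1.0, value))


def snow_mask(normal_y, ao, noise, threshold=0.3):
    """Snow amount for one pixel from Normal (Y), AO and noise AOV values.

    `threshold` (a made-up default) is how far a surface must tilt upwards
    before any snow appears.
    """
    facing = clamp01((normal_y - threshold) / (1.0 - threshold))
    return clamp01(facing * ao * noise)


def blend(beauty, snow, mask):
    """Linear mix of the beauty and snow pass colours for one pixel."""
    return tuple(b + (s - b) * mask for b, s in zip(beauty, snow))
```

A flat, unoccluded pixel gets the full snow render, while a downward-facing one keeps the beauty untouched. Changing the mask in comp moves the snow without re-rendering either pass.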

Snow on the ground was actually one large grid with its own gentle undulations, pushed up near buildings, street furniture and so on. It was then roughened in these locations and poly-reduced, keeping the detail in these bumpy areas. To add further detail when seen at ground level, the snow grid was worked into with displacement maps, particularly footprints.

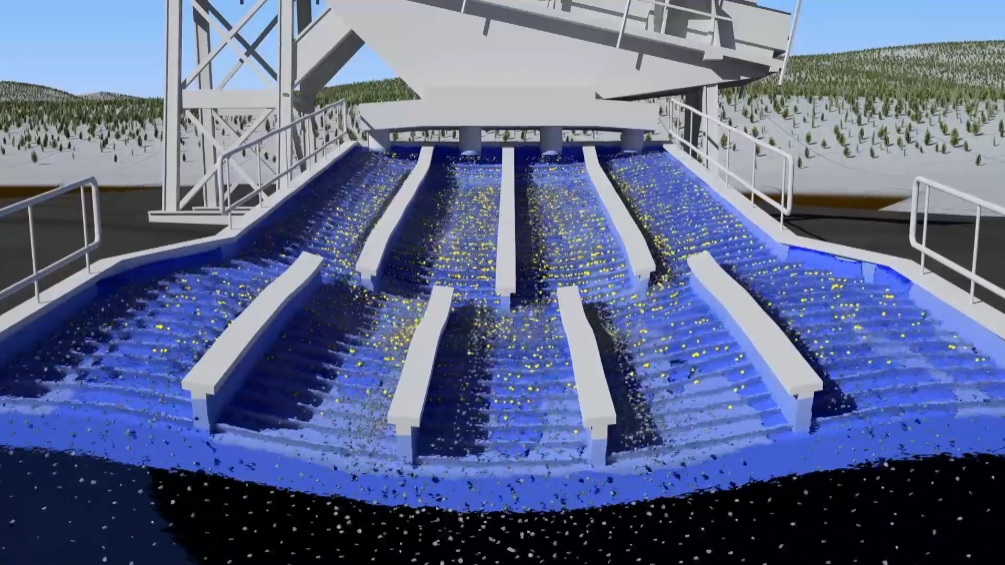

Smashing work

Other tasks on A Gentleman In Moscow included animating a picture frame, shot by the Count to alarm the hotel manager. In the plate, the practical gun went off, then half a second later the picture swung down! For impact, timing and believability we decided to replace the whole thing post-firing. I made a simple model of the picture, complete with photo, mount board, glass and frame, then set up a shattering system.

Stills from set gave me a great reference for which bits of glass remained in the frame and where the bullet hole should be. I drew out wobbly lines radiating from the hole and set up an RBD sim in Houdini. I had trouble getting the finesse I wanted from the SOP-level shattering system, so I only used that to break the mesh in the first place, passing the pieces and the constraints to a custom DOPnet. In there I gave the sim a speed limit and some drag, and transferred velocity from the picture frame over the first frame or two for pieces within a certain range. This was to assist with the appearance of glass being pushed by the frame as well as the bullet. Anything that shot backwards for any reason was rapidly scaled down so that it vanished.
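The speed limit, drag and shrink-to-vanish rules can be sketched outside Houdini as a per-piece update in plain Python. This is not the DOPnet itself. It assumes the picture faces +Z, so a negative Z velocity counts as "backwards", and all the default values are made up for the example.

```python
import math


def step_piece(velocity, scale, dt, max_speed=5.0, drag=0.5, shrink_rate=10.0):
    """Advance one glass piece by one time step and return (velocity, scale)."""
    # Simple linear drag: remove a fraction of the velocity each step.
    damp = max(0.0, 1.0 - drag * dt)
    vx, vy, vz = (component * damp for component in velocity)

    # Speed limit: rescale the velocity if it exceeds max_speed.
    speed = math.sqrt(vx * vx + vy * vy + vz * vz)
    if speed > max_speed:
        factor = max_speed / speed
        vx, vy, vz = vx * factor, vy * factor, vz * factor

    # Assumption: -Z is "backwards". Such pieces shrink quickly towards zero.
    if vz < 0.0:
        scale = max(0.0, scale - shrink_rate * dt)

    return (vx, vy, vz), scale
```

Run once per piece per frame, this caps any runaway shards and makes a piece heading backwards disappear within a few frames at 25 fps with these example values.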

A Gentleman In Moscow is now streaming on Paramount+. To see more of my work, check out the other projects!